HEVC: The Path to Better Pictures

How to migrate from the interlaced origins of television to the progressive future of high quality, ultra high definition media

Digital video has to be compressed to be used. In particular, if we are to deliver it to the home – and let’s face it, there is not a lot of point in making television if we do not deliver it to the home – then it has to be in a bitstream that can be transmitted economically.

To make a good job of compressing video, we need to be careful we only throw away the information that viewers will not miss. Today we are developing appetites for ever-larger raw files: 3D, 4k and even 8k video. So we need to find ways to compress video ever more efficiently.

The next big thing would appear to be HEVC, or High Efficiency Video Coding. This paper looks briefly at the history of encoding, sets the scene for HEVC, and considers how it might be implemented.

It also considers the subject of interlacing. The overwhelming majority of the world’s TV content is interlaced, but HEVC has no interlace modes. Is this crazy or the best decision ever, when nearly every camera and every display device is based on progressive scanning technology? And if it is the best decision ever, how can companies like AmberFin help you migrate from the interlaced origins of television to the progressive future of high quality, ultra high definition media?

A brief history of encoding

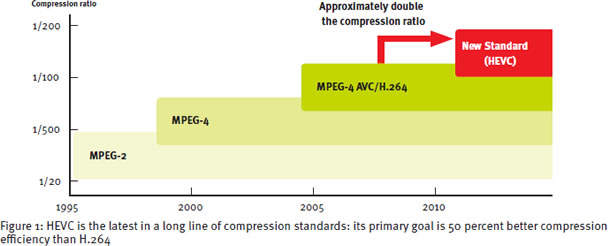

HEVC is the latest in a long line of compression standards. In fact, the practicalities of taking a video signal, digitizing it and using mathematical transforms to compress it into a file date back more than 40 years.

In the 1980s a number of manufacturers were keen to develop professional digital products – effects units as well as recorders – and interest focused around the discrete cosine transform as the most likely mathematical process to achieve best results. Ampex even called its digital recording format the DCT.

At around the same time the Moving Picture Experts Group (MPEG), the moving-picture counterpart to the Joint Photographic Experts Group (JPEG), was being convened for the first time. The MPEG-1 standard was published in the early 1990s. This was optimized for a specific task: recording video on the existing audio CD standard, although it found a more pervasive application in a new medium called the internet.

MPEG-1 was followed by MPEG-2, which used the same fundamental techniques but with the aim of delivering consistently high quality pictures for broadcast.

The work was to make digital cable and satellite broadcasting possible, and led directly to the multichannel revolution.

The project to develop MPEG-4 had a single aim: to double the compression rates of MPEG-2 and thereby get HD down to manageable bitrates. This would allow multiple HD channels to be delivered in a single multiplex and allow for the widespread rollout of HD.

The original project was not successful in hitting its goal. The MPEG organization joined forces with the Video Coding Experts Group from the ITU, the International Telecommunication Union, which was working on the same challenge. The result was MPEG-4 Part 10, or Advanced Video Coding (if you are from MPEG), or H.264 (the ITU standard), first published in May 2003.

MPEG-2 was published in 1996, so seven years had passed before the publication of MPEG-4, during which time Moore’s Law had been delivering its routine doubling of density of processing components.

This was good, because while MPEG-4 achieved its goal of doubling the compression for equivalent picture quality, it took perhaps four times as much processing power to do it.

The aims of HEVC

AVC/H.264 is now widely used for broadcast transmission and for other applications including security, internet delivery, mobile television, Blu-ray discs, video-conferencing, telepresence and more. It can be regarded as a success today.

But technology moves on, and the need for an even more efficient codec has become pressing. First and foremost, there is a lot more video out there that needs handling, transporting and storing. The ubiquity of HD – phones and low-cost cameras like the GoPro now shoot in HD – means that we are all keen to take advantage of the quality, which we can watch on tablets and computers as well as the large television in the living room.

We also have to recognize that, having got good quality HD on air, engineers now want to take it to the next level, so there are even bigger compression challenges on the near horizon.

Finally, we have new processing architectures available, not least the relative ease of building parallel processing systems, which bring much more power to bear on the task. This means we can consider more challenging algorithms without worry.

So the same dream team has now re-formed, with a repetition of the earlier goal: this time we want to double the compression rates of MPEG-4/AVC/H.264. This calls for another step change in compression efficiency, so the new standard is called HEVC, for High Efficiency Video Coding, or H.265 in ITU-speak.

HEVC will no doubt be used in time to increase the numbers of HD channels that can be packed into a transport stream, and it will reduce the payload for non-linear delivery of HD files. It also includes specific functionality to deliver 3D to the home, should that ever find consumer interest.

What the MPEG and ITU initiative also promises, though, with HEVC, is the capability of handling future television formats.

These will have bigger spatial resolution. Today there is pressure to develop 4k television (3840 x 2160, or four times HD resolution), although to be fair much of that pressure has so far come from consumer electronics manufacturers keen to sell us new television sets. The Japanese Super Hi-Vision 8k system (7680 x 4320, 16 times HD) will be a practical proposition around 2020.

Simply to serve these increases in spatial resolution in a reasonable bitrate, of say 10 to 20Mb/s, will be challenge enough for the developers of the HEVC standard and subsequent practical implementations.

But there is another issue: bigger pictures really need to be accompanied by higher frame rates.

High frame rates

Film technology has been with us for more than 100 years, and the movie industry settled back then on 24 pictures a second because that was all that was practically possible with the mechanics of the time. Moving film any faster risked tearing it to shreds – and that is probably true today.

So the visual language of the movies was developed around the 24 fps limitation and it became one of its characteristics. In particular, we accept that when the camera moves it always does so slowly. The shallow depth of field of movie lenses focuses our attention on what is important, and if there is movement then the background is out of focus and so not a distraction.

When television came along we adapted the frame rate we already had. In most of the world 24 fps became 25 fps, which was then faked to 50 fps using interlacing, a subject I will return to in a moment. In the US, the choice was 30 fps (actually 29.97 for reasons which are so arcane they need not detain us here), or 60 fps with interlace.

Television is not like the movies, though, in that a lot of the things we like to watch are not suited to leisurely camera movements. In particular, we love sport on television, and that implies rapid camera movements to keep up with the action.

In SD this is not a problem as there is so little data in the picture that we are not distracted. In HD we have to be a little more careful, but as resolutions increase then rapid camera movements become very distracting. Today’s demonstration reels for 4k and 8k video formats, if they have camera pans or tracks at all, move at glacial speeds. That is because if you pan quickly in 4k, you make the audience sick.

And that is never good. So there is growing interest in higher frame rates to provide more for the eyes to latch on to and the brain to process, to make the experience more comfortable. For 4k television, the current discussions seem to be around 120 fps or four times the current standard frame rate. This is clearly a North American suggestion, of course: despite the fact that 75% of the world watches 50 fps television, the big money is in the other quarter. So a successful 4k television service, then, is likely to involve four times the spatial resolution of HD and four times the temporal resolution, or 16 times the number of bits that we are transmitting today. Which puts the HEVC challenge into perspective.
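The arithmetic behind that factor of 16 is easy to check. The sketch below is illustrative only: the function name, the 8-bit sample depth and the 4:2:0 chroma subsampling (1.5 samples per pixel) are assumptions made for the example, not figures taken from any proposal.

```python
def raw_bitrate_bps(width, height, fps, bits_per_sample=8, samples_per_pixel=1.5):
    """Uncompressed video bitrate in bits per second.

    samples_per_pixel=1.5 models 4:2:0 chroma subsampling and
    bits_per_sample=8 models 8-bit video. Both are assumptions
    chosen for illustration.
    """
    return width * height * fps * bits_per_sample * samples_per_pixel


# HD at 30 pictures a second versus 4k at 120 pictures a second.
hd = raw_bitrate_bps(1920, 1080, 30)
uhd = raw_bitrate_bps(3840, 2160, 120)
ratio = uhd / hd

print(f"HD, 30 fps:  {hd / 1e6:.0f} Mb/s uncompressed")
print(f"4k, 120 fps: {uhd / 1e6:.0f} Mb/s uncompressed")
print(f"ratio:       {ratio:.0f}x")
```

Whatever bit depth and chroma format you assume, they cancel out of the ratio: four times the pixels multiplied by four times the pictures per second gives 16 times the raw data.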

Advances in HEVC

From the days of MPEG-1, encoding was much more than a simple mathematical transform of the pixels. It introduced two fundamental new but related technologies: bi-directional motion compensation and, to provide additional accuracy, half-pixel motion estimation. Being able to look forward in time as well as at past pictures ensured the predictive part of the codec was as accurate as possible.

By the time of AVC/H.264, motion compensation interpolation had become much more sophisticated, applying multiple references to further increase accuracy, reduce the bitrate required and provide smooth consistency.

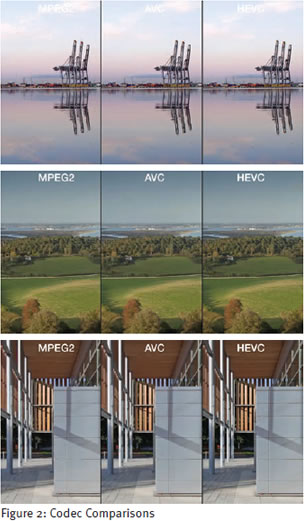

HEVC builds further on this. Despite the temptation to go off into entirely different branches of mathematics to achieve the goals, the design team has sensibly decided to take what we know and develop it further. Advances include improved motion compensated filtering and expanded loop filters for better predictions. HEVC also allows for multiple block sizes for both encoding and motion compensation. All the MPEG family of codecs divide the picture into blocks and process each individually: you have almost certainly seen the blocks when watching an encoder or decoder under stress.

By allowing different block sizes, HEVC improves the coding efficiency at larger spatial resolutions. In a 4k picture the green of the cricket pitch or the blue of the sky will occupy the same proportion of the picture but with very many more pixels than in SD or HD, so being able to dynamically create larger blocks to code regions with few changes in content makes more efficient use of the coding.
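The idea of spending large blocks on flat regions and small blocks on detail can be sketched as a simple quadtree split. This is a toy, not the HEVC algorithm: a real encoder chooses its partitions by weighing bitrate against distortion, whereas the flatness test, the threshold and the function name here are all assumptions made for illustration.

```python
def split_blocks(img, x, y, size, min_size=8, threshold=4):
    """Recursively partition a square region into blocks.

    A region is kept whole when its sample range (max - min) is at or
    below `threshold`, or when it has reached `min_size`. Otherwise it
    is split into four quadrants. Returns a list of (x, y, size) tuples.
    The flatness test is a stand-in for a real encoder's decision logic.
    """
    samples = [img[r][c] for r in range(y, y + size) for c in range(x, x + size)]
    if size <= min_size or max(samples) - min(samples) <= threshold:
        return [(x, y, size)]
    half = size // 2
    blocks = []
    for dy in (0, half):
        for dx in (0, half):
            blocks += split_blocks(img, x + dx, y + dy, half, min_size, threshold)
    return blocks


# Toy 64x64 luma picture: flat "sky" everywhere except a textured 16x16 corner.
N = 64
picture = [[100] * N for _ in range(N)]
for r in range(16):
    for c in range(16):
        picture[r][c] = (r * 37 + c * 91) % 256  # arbitrary synthetic texture

blocks = split_blocks(picture, 0, 0, N)
sizes = sorted({s for _, _, s in blocks}, reverse=True)
print(len(blocks), "blocks, sizes used:", sizes)
print("fixed 8x8 grid would need", (N // 8) ** 2, "blocks")
```

In this toy picture only the textured corner is cut down to the smallest blocks, while the flat area is covered by a handful of large ones.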

Another artifact we have all seen in MPEG-2 and MPEG-4 is banding: what was a smooth color gradient in life becoming a set of distinct color bands in the encoder.

You often see it in skies, or when inexperienced graphic artists think a color gradient would look nice under the credits. HEVC has internal over-sampling. This creates extra least significant bits to map very small changes in intensity or color. By processing at this level, the codec helps to ensure that subtle changes in color are smooth.
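Why extra least significant bits help can be seen by quantizing a gentle gradient at two precisions. The sketch below does not model HEVC's internal processing. It only counts how many distinct code values a shallow ramp occupies at 8 and at 10 bits; the ramp itself (2% of full range across 1920 pixels) is an assumed test signal.

```python
def quantize(values, bits):
    """Map values in the range 0.0 to 1.0 onto integer codes of the given bit depth."""
    peak = (1 << bits) - 1
    return [int(v * peak + 0.5) for v in values]


# Assumed test signal: a shallow gradient, such as an evening sky,
# rising by 2% of full range across a 1920-pixel line.
width = 1920
ramp = [0.40 + 0.02 * i / (width - 1) for i in range(width)]

levels8 = len(set(quantize(ramp, 8)))
levels10 = len(set(quantize(ramp, 10)))

print(f"8-bit:  {levels8} distinct levels, bands about {width // levels8} pixels wide")
print(f"10-bit: {levels10} distinct levels, bands about {width // levels10} pixels wide")
```

With fewer levels each one has to stretch across more pixels, and wide bands are exactly what the eye picks out in a sky. The extra bits give narrower steps that are much harder to see.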

The downside of these additions to the codec is that it requires more computational processing. The current estimate is that HEVC will need two to three times the horsepower of H.264 for the equivalent content.

Again, Moore’s Law is continuing to help, and this additional demand for more complexity with more pixels and more frames should not represent too much of a problem over time.

Interlace is evil

Once more we need to return to the dawn of television history. The whole chain, from camera to home receiver, depended upon valves and diodes: devices that could barely keep up with any sort of video bandwidth. So interlaced scanning was invented to give the illusion of vertical detail within the constraints of the electronics of the time.

Interlace is a simple compression technique (if you are feeling generous) or a fudge (if you are not). The basic trade-off is how many vertical lines of television resolution you can achieve for a given frame rate in a given signal bandwidth. Interlace is a compromise that transmits 60 (or 50) pictures a second, each carrying only half the lines: all the odd lines in the first picture, then all the even lines in the second. Compared with sending every line every time you need only half the bandwidth, on static scenes the two fields combine to give the full vertical resolution, and you fool the eye into seeing smooth movement at effectively 60 (or 50) pictures per second.
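The mechanics, and the problem they cause on a progressive display, fit in a few lines. This is a minimal sketch with hypothetical helper names, using strings as scan lines: it splits frames into fields, then weaves together two fields captured at different moments, which is what a naive receiver does with moving content.

```python
def split_fields(frame):
    """Return (top_field, bottom_field): lines 1, 3, 5... and lines 2, 4, 6..."""
    return frame[0::2], frame[1::2]


def weave(top, bottom):
    """Rebuild a full frame by alternating lines from the two fields."""
    frame = []
    for t, b in zip(top, bottom):
        frame.extend([t, b])
    return frame


def frame_with_bar(position, width=8, height=4):
    """A tiny test picture: every line has a 2-pixel bar starting at `position`."""
    line = "." * position + "##" + "." * (width - position - 2)
    return [line] * height


early = frame_with_bar(1)  # where the object is at the first field time
late = frame_with_bar(4)   # the object has moved by the second field time

top, _ = split_fields(early)     # the first field carries the odd lines
_, bottom = split_fields(late)   # the second field carries the even lines
woven = weave(top, bottom)

print("\n".join(woven))
```

A static picture survives the round trip untouched, since weaving the two fields of the same frame returns the original. With movement, alternate lines disagree about where the object is, which is the familiar combing artifact that every deinterlacer has to hide.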

Forgive me if I shout, but WE DO NOT NEED TO DO THIS ANY MORE. Modern solid state electronics and digital processing are more than capable of supporting true 50 fps, or indeed 120 fps, or a more international 150 fps.

With proper progressive scanning. Without compromise. With each passing year, we also have less and less ability to correctly display interlaced pictures. CRTs are no longer on sale. Plasma, LCD, OLED and the rest are all progressively scanned screens. To show an interlaced television stream on them, they have to convert it internally to progressive scanning. Broadcasters and creative producers have no control over how this will work inside the television or set-top box, and what artifacts it will add to the image.

Many producers take great care over the look of their content. They may choose to shoot in a “film look” mode, at 25 fps. They may choose to shoot 50p to capture fast movement. What they have in common is that they want viewers to see what they created. Converting everything to an interlaced scan just for the hop from the broadcaster to the home means that the way that vision is preserved is entirely dependent upon the deinterlacer in the receiver, a chip likely chosen by the manufacturer solely on the basis of cost.

One of the great advances in the HEVC specification is that it does not support interlace. It is simply not there: all the transmission modes assume progressive scanning. Ding dong, the witch is dead! And yet even now there are people trying to squeeze an interlaced mode into HEVC. They must be resisted.

Encoding interlaced video

When it comes to achieving a high quality, clean encode of video – and in particular high frame rate, high resolution video – then interlacing is a huge distraction. Inevitably it adds noise and reduces quality, because the compromises inherent in it do not work well with the underlying algorithms.

In technical terms, television is a sub-Nyquist system. Mr Nyquist, you will remember, postulated that you need to sample at more than twice the highest frequency you wish to reproduce. It is why we digitize 20 kHz audio with 48 kHz sampling. 25 frames a second – even 120 frames a second – are not enough samples to capture the fastest motion we are likely to want to portray on television. This is another reason that 4k showreels are glacially slow landscapes: look how sharp all the pixels are.
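The classic symptom of sub-Nyquist sampling in pictures is the wagon wheel that appears to turn backwards. As a rough sketch, the function below (a hypothetical helper, treating motion as a single repeating frequency) folds a true frequency into the band a given sampling rate can represent.

```python
def apparent_frequency(true_hz, sample_hz):
    """Frequency seen after sampling, folded into the band around zero.

    The result lies between -sample_hz/2 and +sample_hz/2.
    A negative result means the motion appears to run backwards.
    """
    folded = true_hz % sample_hz
    if folded > sample_hz / 2:
        folded -= sample_hz
    return folded


# Audio: 20 kHz sits below half of 48 kHz, so it is captured faithfully.
print(apparent_frequency(20_000, 48_000))

# Pictures: wheel spokes passing 23 times a second, shot at 25 fps,
# are above the 12.5 Hz limit and alias to a slow backwards crawl.
print(apparent_frequency(23, 25))

# At 120 fps the same wheel is below the 60 Hz limit and looks right.
print(apparent_frequency(23, 120))
```

Raising the frame rate pushes the limit up, but fast enough motion will always find it, which is the sense in which television remains a sub-Nyquist system.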

Our eye/brain combination does not worry about this. We are good at synthesizing information from limited data. If we catch movement out of the corner of our eye we can pretty much always tell if it is tumbleweed or a tiger, and take the appropriate action.

Even the cleverest of algorithms running on the most powerful encoding platform cannot emulate this intelligence. It just has to take the pixels it is given and perform the transforms. In particular, if something is an artifact caused by the interlace process, it is impossible for the encoder to discriminate between it and real high resolution detail.

Even if your content was created in an interlaced format or restored from an archive in an interlaced format, passing it through a professional de-interlacer such as the AmberFin iCR will at least ensure a clean progressive signal, free from artifacts, going into the HEVC encoder. The iCR is also the perfect host for a software-based HEVC codec. iCR integrates high quality de-interlacing within a generic transcode platform, and tightly couples transcode to a variety of media QC tools to check quality before delivery.

HEVC will be a powerful tool, particularly as we move to higher resolutions and higher frame rates. And we must fight to preserve HEVC as a progressive-only codec to help rid the world of the evil that is interlace.

Conclusion

We have two pressing reasons to consider another quantum leap in video encoding efficiency.

First, we all want more HD, and we want it over the internet and wireless connectivity. The required massive increase in bandwidth capacity is not going to happen economically, so we have to be more careful with the bandwidth we have. Packing HD into smaller streams is a real requirement if we are to be able to meet the growing demand for high quality content, when and where we want it.

Second, we are now looking beyond HD to even higher quality. That may be brought about through more pixels, through higher frame rates, or best of all through both together. The proposals for ultra-high definition formats create raw files many times larger than HD, so we need very high efficiency encoding to keep delivery formats manageable.

The HEVC/H.265 standard addresses these issues, achieving its goal of a 50% reduction in bitrate for equivalent perceived quality. The first version of the standard was approved on 13 April 2013 by ITU, and published in June. Already there is a long list of products that claim HEVC capability, and end-to-end systems have been demonstrated.

AmberFin is among the vendors who are ready for HEVC. The iCR platform ensures clean content entering the encoding process – including from interlaced material – and implements the standard in high quality, high performance software.

• Editor's note: This article has been published in its original language because of its relevance and because the subject is of vital importance right now to the industry, which is establishing the standards for 4K technology. The article was developed at the Amberfin Academy, an educational establishment where Amberfin carries out research and innovation projects. For more information, visit www.amberfin.com/academy